Intro. [Recording date: February 1st, 2024.]

Russ Roberts: Today is February 1st, 2024, and my guest is economist and author Jeremy Weber. He is the author of Statistics for Public Policy: A Practical Guide to Being Mostly Right or At Least Respectably Wrong, which is the topic of our conversation. Jeremy, welcome to EconTalk.

Jeremy Weber: Thanks so much for having me. It’s a privilege.

1:00

Russ Roberts: How did you come to write this book?

Jeremy Weber: The book was in development in my head for probably more than a decade. It began after I spent four years working in the Federal Government, in a Federal statistical agency, the Economic Research Service. And, that was a great place to be as a recent econ Ph.D. grad. It was a mix of more academic research, very policy-oriented research, and generating real official federal government statistics, interacting with policy people.

Then I went into academia to teach statistics to policy students. With the book I was using and the course that I inherited, I very quickly had the feeling I was more or less wasting students’ time, or at the very least there were huge gaps such that when they left my class, they weren’t going to be prepared to use any of this to help anyone in a practical setting.

And, from that point on, I started to accumulate notes on things that, if I were to write a book, I would want to include and things that I was now using to complement the statistics textbook to give my students more.

And then, in 2019, I spent a year and a half at the Council of Economic Advisers [CEA] and that was like an accelerator for this whole idea. Because being engrossed in that environment gave me many examples, many ideas. And then when I came back to the University of Pittsburgh and had a sabbatical, I said I’ve got to write this.

Russ Roberts: What is its purpose and who is the audience?

Jeremy Weber: Yeah. I’ll start with the audience.

The audience is broad, because frankly, whether it’s your first statistics class or your fifth, many of the issues are the same and neither the intro nor the advanced classes tend to do some things well. In particular, the communication of statistics to a non-academic audience, and the integration of context and purpose (of the moment, of the organization, or of the audience) into what you’re presenting–its significance for the situation at hand–we tend not to do that well, I think, whether at the undergraduate level or for Ph.D.s who are in their fifth year of econometrics. So the audience is broad.

3:50

Russ Roberts: So, it’s a very short book. There are a couple of equations, but they’re there as illustrations. And, what is spectacular about the book, I would say–and I would recommend it to non-technical readers–what is very powerfully and well done about the book is that it gives the reader who is not an econometrics grad student a very clear basic understanding of terms that you hear all the time out in the world from journalists and occasionally from a website you might visit that highlights academic research.

So, you’ll learn what a standard error is, you’ll learn what a confidence interval is. But, it’s not a statistics textbook in that sense.

However, those–that jargon–and other concepts that are used widely in statistics are very intimidating, I think, for non-academics.

And your book does an excellent job of making them accessible.

And then, of course, it goes well beyond that. You’re trying to give people the flavor of how to use these concepts and how to use data produced in a wide range of applications, from calculating means and correlations up through more sophisticated statistical analysis like regression. You’re giving people insight into how to use them thoughtfully.

And, as you point out, no one teaches you how to do that in graduate school or in undergraduate if you take statistics. They’re taught more as, I would say, a cooking class. You learned to add certain ingredients together. If you want to make a cake, you need flour and you need eggs and you need this and a certain amount of heat. Whether it’s going to be a good cake or not is a different question. Whether that cake belongs to a certain kind of meal or a different meal, those are the things that practitioners learn if they’re lucky. But, you’re not taught those things.

And certainly people who don’t go to graduate school or don’t take a number of statistics classes in college will never, ever have any idea about it. So, I just want to recommend the book. If those kinds of ideas appeal to you, you’ll enjoy this book and it will be useful to you. Is that a fair assessment?

Jeremy Weber: That’s a very fair assessment. You use the cooking example. I allude to kind of a vocational example in the book, where our statistics education, I would say teaches–it shows you: Here is the saw. And: Here are the parts of the saw. And, maybe we even, like, start it. And then, we put it down and we move on to another tool. Or, maybe we work with 10 different types of souped-up chainsaws, really sophisticated chainsaws. But, we’re just like, these are again, the features and parts of the chainsaw.

Actually going out and cutting down trees, like, do that–we don’t do that. That is–we don’t do that. We know people do that, but we’re not doing that.

And, that’s a bit of the gap I’m trying to fill.

7:07

Russ Roberts: And, the more standard metaphor you also use is the hammer. And, we may come back to this, but of course the standard, the cliché’d condemnation of mindless statistical education is: Once you have a hammer, everything looks like a nail. And, it’s really fun to run regressions and do statistical analyses once you understand how basic statistical packages work, without wondering whether it’s a good idea, what’s the implication of the analysis, how reliable is it, and does it answer questions as opposed to just provide ammunition for various armies in the policy battle?

And I think for me, that’s one of my concerns. We’ll come back and talk about it later, I hope, in terms of how we should think about education in, and the practice of, statistics. But, it’s such a fun tool. It’s a lot more fun than a hammer. It is more like a chainsaw. It’s noisy and attracts attention and people like to cut down trees. So, there is a certain danger to it that your book highlights–in a very polite way–but, I think there’s a danger to it. You can respond to that.

Jeremy Weber: Yeah. It is fun until it’s not.

And, when it’s not is when you are using this regression tool and you’ve maybe used it with the academic crowd; and that was fun. But then, you go to another crowd–the City Council crowd or some sort of more non-academic crowd–and you present it; and suddenly it’s not fun because nobody knows what you’re talking about and the conversation quickly moves on and you feel, like, out of place. Fish out of water. You’ve miscommunicated. People are confused. And now they’re ignoring you.

Russ Roberts: But of course, the flip side also occurs, right? The scientist in the white coat. And, in this case it’s the economist or policy analyst armed with Greek letters in their appendix. At least in their paper if not their physical one.

And, there’s an awe of these kinds of people: ‘Obviously they’re smarter than I am and obviously they’re experts.’ Maybe I’m overly pessimistic here.

A lot of times I feel like in those settings outside of academic life, there’s a lot of trust in the reliability of numbers produced with what I would call standard practice. And, once you follow the rules of standard practice–which means statistical significance, confidence intervals and so on, and you frame your work with those footnotes, then you’re credible.

And just simply because you’re in the arena and you’ve been trained accordingly, you’re a bit of a shaman. And, I think that’s a little bit dangerous.

As is the opposite: ‘Well, they’re obviously wrong. They are a bunch of academic eggheads and they don’t know what they’re talking about.’ So, I think there’s an interesting challenge there when we go out into the world.

Jeremy Weber: Yeah. You’re right. In certain environments there’s that deference, that credibility conferred because of the mathiness, because of the training, the aura. I agree: That is a case that does happen in certain environments.

11:00

Russ Roberts: Now, I argued in a recent episode that statistical analysis is used more for weaponry than truth-seeking in the political process. And, I think it was misunderstood by some listeners. I think data and numbers are very useful to politicians and policy players. But I don’t think they’re so interested in the truth, and I wonder how your book would be perceived by them.

Jeremy Weber: Yeah. I agree with your assessment. Primarily weaponry, especially in the D.C. [Washington, D.C.] area.

But, if the weapons being picked up are actually real, understood measurements that accurately reflect an issue–even if they don’t reflect the full scope and they’re being used selectively–that matters. If there are good measurements out there and there are competing parties fighting, each party is going to pick up the most effective weapon, the one that most appeals to the audience out there.

And so, if there are, in a way, better weapons out there that can be picked up, I think it’s more likely that some major problems get avoided or opportunities get pursued.

And I’ll give you a concrete example. When I was in the White House, the Commerce Department was petitioned by some uranium mining producers for protection. They didn’t want imported uranium coming into the United States. The Commerce Department conducted an investigation and did a Report on the issue. They did their own survey. They presented some statistics in this Report, which went to the President recommending restrictions on imports. Okay? You know.

So, Commerce Department, they’ve got their weapon. All right? And, CEA got involved–

Russ Roberts: The Council of Economic Advisers–

Jeremy Weber: That’s right. Council of Economic Advisers got involved.

I grabbed some other data. I did some analysis. I generated, you could say, another weapon that I thought was actually a better depiction or reflection of the economic reality and what was likely to happen under the Commerce [Department of Commerce] proposal.

All right. So, we got Commerce and other agencies together in the room, and in a way we had our battle. We picked up our weapons. I think, at the end of the day, we ended up at a better place because I was able to pick up a weapon and there was this back-and-forth with the data. But had CEA not been there, or had those reports that I relied on from the Energy Information Administration not been there, everybody would have bowed down to Commerce and they would have rolled right through, and the President would have said, we’ve got to restrict uranium imports so we can prop up these several producers out in Utah–at the expense of the nuclear power industry and electricity consumers.

Russ Roberts: Yeah. That’s a great example. In theory, the Council of Economic Advisers–and I think to some extent it plays this role, as best as I can understand it–is something of a technocratic fact-checker on the advocacy of other agencies, and in theory is somewhat unbiased.

In this case I assume the argument was that this was going to create a lot of jobs in Utah. Was it Utah?

Jeremy Weber: Yeah. There are several places where uranium mining occurs. Utah is one of them. That’s where at least one of the companies filing the petition was located. There was a national security argument. There was a whole argument about the resiliency of the uranium supply chain. There was a jobs argument, too. That’s right.

Russ Roberts: If I can ask, what was the key finding that you felt was at least somewhat decisive in derailing a strong impulse toward restrictions on imports?

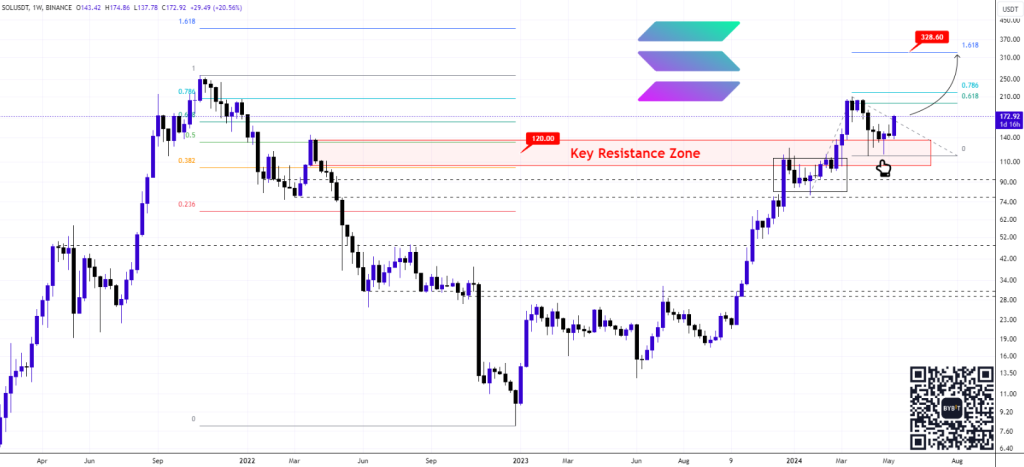

Jeremy Weber: The key finding was: The Commerce Department had said, at $55 a pound, we think the domestic uranium sector is going to produce the amount that we’re going to require domestic nuclear power producers to purchase from them. So, this is not going to increase domestic prices for uranium much. $55 is just a little bit above what the market price was at the time.

My analysis said: Unlikely. The price on the domestic market will be a lot higher. For the uranium sector in the United States to produce six million pounds of uranium–which is what the requirement was going to be, the buy-American requirement, so to speak–you are going to need a dramatically higher price. And, that price is either going to get passed on to consumers, especially in places with a lot of nuclear-generated electricity, or the nuclear producers were going to eat it and it was going to push some of them over the edge, particularly in places like Michigan, Pennsylvania, and Ohio. Important states.

And, so my basic argument was: this restriction is going to cause prices to increase a lot. And for the key statistic–I did some more sophisticated stuff in the background, but I didn’t bring that in as Plan A in the meeting. I brought in two figures–two graphs–that simply showed, look, in recent history, the price of uranium has been way above your price for several years and the domestic sector didn’t come anywhere close to producing what you’re saying they would produce now at a much lower price.

So, this simple descriptive statistic, I think, convinced everyone in the room except Commerce that there’s no way the domestic price is going to be $55 with six million pounds coming out of the ground. So, in the Decision Memo to the President, our CEA estimate of what the price was going to do, and what it was going to do to electricity prices and electricity consumers, was in there. And, I don’t know how much influence that had, but people who were familiar with the matter said it was really important that that point was made.

Russ Roberts: For economics majors out there, this was a debate about the elasticity of supply, a phrase that I’m not sure has been uttered more than a couple of times in the history of this program. It means: how responsive is production to changes in price? And, if the answer is not very much, then you’re going to need a much higher price to make the market work; and the demand for uranium once foreign supplies are unavailable is going to push the domestic price up much higher than $55.

And, that’s very nice. Now of course, as you point out, you had more sophisticated stuff in the appendix. But often the basic facts can be persuasive, or at least provoke people to reconsider a position.
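The elasticity-of-supply point can be made concrete with a small calculation. This is a minimal sketch, not the CEA analysis described above: it assumes a constant-elasticity supply curve, Q = A·P^ε, so the price needed to raise domestic output from Q0 to Q1 is P1 = P0·(Q1/Q0)^(1/ε). The function name `required_price` and every input except the six-million-pound target are hypothetical, chosen only for illustration.

```python
def required_price(p0, q0, q1, elasticity):
    """Price needed to move domestic supply from q0 to q1.

    Assumes a hypothetical constant-elasticity supply curve Q = A * P**elasticity,
    so Q1/Q0 = (P1/P0)**elasticity and therefore P1 = P0 * (Q1/Q0)**(1/elasticity).
    """
    if min(p0, q0, q1, elasticity) <= 0:
        raise ValueError("prices, quantities, and elasticity must all be positive")
    return p0 * (q1 / q0) ** (1 / elasticity)


if __name__ == "__main__":
    # Hypothetical starting point: 1 million pounds produced at $50 a pound.
    p0, q0 = 50.0, 1.0
    # Target from the conversation: six million pounds of domestic output.
    q1 = 6.0
    for elasticity in (0.5, 1.0, 2.0, 5.0):
        price = required_price(p0, q0, q1, elasticity)
        print(f"supply elasticity {elasticity}: about ${price:,.0f} a pound")
```

The less responsive supply is (the smaller the elasticity), the higher the price needed to reach the same quantity. That is the logic behind the two graphs: if domestic producers never approached the target even when prices were well above $55, a $55 price is unlikely to get them there.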

18:46

Russ Roberts: What are your thoughts on our profession generally and our ability to establish something like a truth on the basis of statistical analysis?

So, for example, what’s the effect of the minimum wage on employment among, say, low-skilled labor? The profession used to believe the answer was that the minimum wage is very bad for low-skilled labor: it would cause a lot of jobs to go away. In recent years, a lot of thoughtful people have made the opposite argument: its effects are either small or zero. There’s been pushback against that by other people saying that’s actually wrong: in the short run it might be true, but in the long run the effect is big; or, you didn’t measure it correctly.

And, if I asked an economist, ‘What’s the effect of, say, a 25% or 15% increase in the minimum wage?’ the answer would depend on who you asked. And, that’s weird. If you ask a physicist what the effect of gravity is, they don’t argue about it. There’s a consensus. We don’t really have those kinds of consensuses–I don’t know if that’s the right word–in economics, it seems to me. Do you agree?

Jeremy Weber: I agree. And, I think the key difference is that, as long as we’re in the earth realm, gravity is pretty contextless. It’s not context-dependent. Social settings are so varied, and so the situations in which we estimate these relationships are oftentimes conditioned by the moment in history, the place.

And, I’ll give you a concrete example. My subject area of expertise is energy and environment. I’ve done work on fossil fuel extraction, effects on communities. A big question that the literature was considering several years ago was if you have fracking–if you have natural gas drilling in an area–what happens to property values? Somebody looked at that question in Pennsylvania and found, well, for many homes it will be a negative effect. People are not going to want to live near this, particularly homes dependent on groundwater. Okay? That’s Pennsylvania.

I looked at Texas. I found housing prices go up quite a bit in the vicinity where the fracking took off. Well, the reason for the difference–or the primary reason–was that in Texas you tax natural gas wells as property. So, when you drill a well, the full value of that well enters the tax base. That’s like we just built a bunch of million-dollar McMansions and now those people are paying taxes on those houses. That money is going to the local government. It’s going to the schools. It turns out that in Texas they then lowered the property tax rate, and so people’s tax bills went down, the schools were getting more revenue, and property values generally went up in the area.

That’s a very different finding than in Pennsylvania, where they don’t tax the wells. There’s no revenue generation for the schools, no reduction in property taxes. Same basic phenomenon of fracking, fundamentally different effects on this outcome, because the context–the policy environment–was so different.

And, I think that’s just one illustration. With the minimum wage, the question is similar: are we raising the minimum wage in an area where the market wage is already pretty high, so we’re basically just moving it close to the market wage? Or not?

So, I think part of the reason it’s hard to come up with a consensus is that context matters; and that certainly matters for policy. Considering context is something I emphasize a great deal in the book.

22:40

Russ Roberts: Of course, you would like to think that the fundamental market forces are the same. They may be, in, say, the case of minimum wage. And, people might disagree about what those are. That was, again, not so true I think in the past–say, 50 years ago–but is much more true, say, in the last 15 or 20 years.

But, I do think there is a feeling among younger economists and I would–Jeremy, I put you in that group relative to me. Just looking at you, I would say–

Jeremy Weber: I appreciate it–

Russ Roberts: No problem. I think there’s a consensus–not a consensus–there’s a flavor of recently-trained economist who says: ‘I don’t look at theory, at what theory says the minimum wage’s impact should be or is likely to be. I just look at the data. I just read the output from my statistical package, and I look for the truth, and whatever it says, that’s our best understanding of how the minimum wage affects low-skilled labor, at this point, in the areas where I looked at it.’ And, I find that an untenable view, but I think I’m in the minority in the modern world. Is that true?

Jeremy Weber: No. I agree with your assessment that there is a tendency, a culture that is shifting or has shifted, where we just want to go right to the data and not do the heavy thinking beforehand about setting things up: What are we trying to answer? What is the general theory that we’re trying to test? We’re just going into the data too quickly.

And, one of my recommendations in the regression chapter is: never run a regression without a clear purpose for doing so. It is so easy to be led to strange places just by meandering through the data. And, you know, we all know we’re not supposed to go looking for certain results, but that is so easy to do. You start getting a hunch: ‘Oh, this would be a great story if it works out this certain way.’ And, lo and behold, then you start looking for that story and you’re like, ‘Oh, it doesn’t work quite right here, but what if I subset the data this way?’ and suddenly the story emerges and then at the end of the day you’re, like, ‘Well, I can sell this story.’ Like, ‘This is coherent enough.’ But is it a manufactured story? And, I think that does happen more often than it probably should. [More to come, 25:17]
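The danger of subsetting the data until a story emerges can be illustrated with a small simulation. This is a hypothetical sketch, not an example from the book: it generates data in which a "treatment" has no effect at all, splits the sample into subgroups, and runs a nominal 5% two-sided z-test in each. With a single pre-specified test, roughly 5% of simulated studies find a "significant" effect; with ten subgroups to search, roughly 40% do (about 1 − 0.95^10), even though there is nothing to find. The function names, sample size, and number of subgroups are all assumptions chosen for illustration.

```python
import math
import random


def mean_and_variance(xs):
    """Sample mean and unbiased sample variance."""
    m = sum(xs) / len(xs)
    return m, sum((x - m) ** 2 for x in xs) / (len(xs) - 1)


def z_stat(a, b):
    """Large-sample z statistic for the difference in means between groups a and b."""
    mean_a, var_a = mean_and_variance(a)
    mean_b, var_b = mean_and_variance(b)
    return (mean_a - mean_b) / math.sqrt(var_a / len(a) + var_b / len(b))


def finds_a_story(rng, n=1000, n_subgroups=10):
    """Simulate one study with NO true effect, then search subgroups for a 'significant' one."""
    groups = [([], []) for _ in range(n_subgroups)]  # (treated, control) outcomes per subgroup
    for _ in range(n):
        treated, control = groups[rng.randrange(n_subgroups)]
        outcome = rng.gauss(0.0, 1.0)  # drawn independently of treatment: true effect is zero
        (treated if rng.random() < 0.5 else control).append(outcome)
    # True as soon as any subgroup (with at least 2 obs per arm) clears |z| > 1.96.
    return any(
        len(t) > 1 and len(c) > 1 and abs(z_stat(t, c)) > 1.96 for t, c in groups
    )


def share_with_spurious_finding(trials=300, seed=0, **kwargs):
    """Fraction of simulated null studies in which subgroup searching 'finds' an effect."""
    rng = random.Random(seed)
    return sum(finds_a_story(rng, **kwargs) for _ in range(trials)) / trials


if __name__ == "__main__":
    print("one pre-specified test:", share_with_spurious_finding(n_subgroups=1))
    print("searching 10 subgroups:", share_with_spurious_finding(n_subgroups=10))
```

The exact percentages matter less than the mechanism: each extra cut of the data is another chance for noise to look like a story, which is why settling the purpose of a regression before running it helps.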