Intro. [Recording date: August 28, 2023.]

Russ Roberts: Today is August 28th, 2023, and my guest is Michael Munger of Duke University. He hosts the podcast, The Answer Is Transaction Costs. This is Mike’s 46th appearance on EconTalk. He was last here in June of 2023, talking about obedience to the unenforceable. Michael, welcome back to EconTalk.

Michael Munger: Thanks, Russ.

Russ Roberts: Our topic for today is an essay you wrote on the trolley problem and Adam Smith.

I want to add: We’re probably going to get into some serious themes related to death. Parents may wish to screen this episode before sharing it with children.

1:15

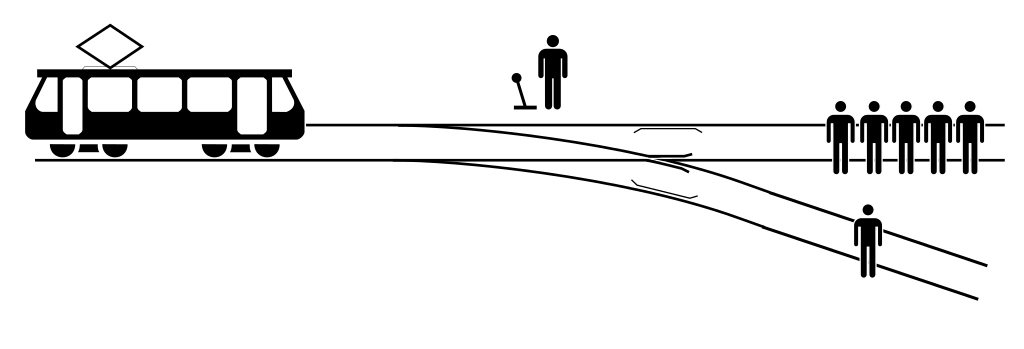

Russ Roberts: Let’s start with the trolley problem. Ages ago, back in 2015, we did an episode with Joshua Greene, Harvard University, that spent some time on this philosophical hypothetical. What is the trolley problem?

Michael Munger: The trolley problem raises the question of what Philippa Foot–the philosopher who first kind of raised the question in a systematic way, at least in this context–called the doctrine of double effect, or the difference between killing and allowing to die. And so, both of those doctrines are actually quite ancient.

And, interestingly, for economists, these tend to be–economists pretend that they’re utilitarians or consequentialists. And, that is, the greatest good for the greatest number. Now, there’s problems with that. We can’t add up utility. But, the origin of this was–well, suppose we have some rule that allows us to treat people as equals. And, in a democracy or in liberal theory, that’s not unfair. So, if we treat everyone as equals, then you can kind of add up lives or something like statistical lives saved.

So, it’s common for us to use cost-benefit analysis as if we could add up utility. So, that was one of the reasons that I got interested in this to begin with.

But, it’s an ancient problem, and it started probably with the Cesarean section. Now, the Cesarean section, as the name suggests, was–there is a child of Caesar that a woman is bearing, and the woman is sick, or at the time when the child is to be born, it’s a breech birth and you’re worried that both the mother and child are going to die. Should you remove–with all of the catastrophic cutting that that implies–should you remove the baby from the mother’s womb? Knowing, in 200 AD, that that means the mother dies for certain, but you might save the baby.

And so, the difficulty is that it seems like a weak Pareto-improvement. They’re both going to die, or maybe we can save the baby.

And so, the economist might say, ‘It’s a hard choice, but okay, let’s do that.’ Philippa Foot–

Russ Roberts: And, just to–you should say something about what you mean by a ‘weak Pareto-improvement.’

Michael Munger: So, the Pareto criterion that economists often use as a substitute for ethical reasoning is a way to say that: Suppose that everyone is better off–two states of the world, A and B, and everyone is better off. The weak version is, no one is worse off, and at least one person is better off.

Obviously, we have choice A, which is: both the mother and the baby die. Choice B is: the mother dies, but probably, we can save the baby. B is better than A, as horrible as that sounds. Now the difference is that in the case of the mother and the baby dying, we’re doing nothing to cause that. It just happens as a result of natural events.

In the case of the Cesarean section, we’re actively intervening to kill one of the parties.

So, you can see why, and those familiar with the trolley problem, can see why this is a version of the trolley problem.

Russ Roberts: And, on utilitarian grounds, the fact that the baby has a longer lifespan ahead of them than the mother, would justify it in the Benthamite calculus. Bentham, being the father of utilitarianism. Carry on.

Michael Munger: Well, and so the real problem was that this was Caesar’s baby and no one cared about the woman–so, it’s in a patriarchal society. But, yes, the best version you can give of it is what you said.

So, what Philippa Foot–and I went down–I was preparing for this podcast and I looked up Philippa Foot and started to read about her. She’s one of the most interesting people I’ve ever read about. I’ve probably spent 10 hours down the Philippa Foot rabbit hole this weekend. And, I blame you personally for that, Russ.

Russ Roberts: It’s okay. I’m going to double your fee for this. I guess, tenfold increase in your fee for this episode, Mike. So, you’ll end up better off.

Michael Munger: That makes it all worthwhile, thank you.

So, what Philippa Foot noted was that the problem of abortion often flips that calculus. So, particularly in the case of a difficult pregnancy that endangers the life of the mother, either both are going to die, or we’re going to intervene and abort the fetus and save the life of the mother.

Now, the problem is that, in the case of a difficult pregnancy where both might die, those are happening in the natural course of events. Or, we actively intervene and kill the fetus, with the hopes that it makes the mother better off, because otherwise, both of them would’ve died. If the mother dies, obviously, the baby dies.

And so, the difference is–and this is where she pointed this out first in an article in 1967, which was where she first posits what she called the tram problem. We have translated that into English, so it’s the trolley problem now. But, there’s a tram that is–well, let me read her version of it. So:

it may be supposed that a person is the driver of a runaway tram, which he can only steer from one narrow track onto another; five men are working on one track and one man on the other; anyone on the track he enters is bound to be killed. The question is why we would say, without hesitation, that the driver should steer for the less occupied track, while most of us would be appalled at the idea that the innocent man could be framed [‘would have been killed on purpose’–Munger].

So:

The doctrine of double effect offers us a way out of the difficulty, insisting that it is one thing to steer toward someone foreseeing that you will kill him and another to aim at his death as a part of your plan. In real life, it would hardly ever be certain that the man on the narrow track would be killed. Perhaps he might find [‘gains’–Munger] a foothold on the side of the tunnel and cling[s] on as the vehicle hurtled by. The driver of the tram does not then leap off and brain the man with a crowbar. [italics in original]

So, the point is that I have a choice between two alternatives which I did not create. And, in my little essay, I tried to identify the importance of this difference.

So, if you look at surveys of what is called the trolley problem, if I am on a runaway trolley, no brakes, no way to stop, and I’m heading towards a track that has one person who cannot escape and will be killed if I do nothing, or I can pull a switch and divert onto another track that will kill five people, but I can save the life of the one. Should I save the life of the one by acting? No one says that I should do that. No one says that I should divert and kill the five to save the one.

Now, what looks like almost the same thing, but is quite different: Suppose the tram is hurtling down a track and there’s five people on that track, or I can divert. If I divert, it will go down a track that will kill one person. Do I do that? Now, from a sort of economic consequentialist perspective, there’s no difference. But, there is a difference between my intention and my acting. So, the doctrine of double effect would say that any action that I take has two effects and I have to compare their consequences.

And Philippa Foot introduces this comparison, that she later expands on in a number of other, what she called, moral dilemmas. And, the moral dilemma is that we feel like there’s a difference in terms of moral agency. And, what moral agency means is that if I allow someone to die, it is very different from me actively killing them.

9:47

Russ Roberts: So, first of all, I think it’s fascinating. I’ve never read the original article that you’re reading from. The fact that she notes the possibility of the one person leaping aside, I think is one of the reasons that some of these hypotheticals are foolish–not so much as a conversation topic; I don’t find them foolish at all, I find them very interesting. But, when surveys are done and certain proportions of people answer one way or the other, people make big, grand conclusions about the human brain or about morality. And, I think it’s very hard for most human beings answering a survey to ignore the real-world aspects of the hypothetical.

So, in all the hypotheticals in all the survey versions, you’re told: The person will die with certainty. Or: They can’t get out of the way. Right? And, there’s a third version, of course, which I think we’ve mentioned on the program at some point, where you have a choice. You’re on a footbridge, and you have a chance to push a person over the footbridge to stop the train that’s about to kill five people. And, I think those survey results are very problematic, because I don’t think you can ignore the possibility that other things will happen. You could assume that, but I find that a little bit troubling. And–

Michael Munger: Let me say–

Russ Roberts: Go ahead.

Michael Munger: Please, go ahead.

Russ Roberts: And, I think I’ve mentioned on the program before, when I posed this problem to my children, one of my kids–who at that point was, like, 10 years old–said, ‘Well, I wouldn’t push the heavy person over the footbridge railing and save the others.’ I said, ‘Why not?’ He said, ‘Because he might fight with me, and then I wouldn’t be able to push him over, and I might get pushed over.’ And, I thought, ‘Well, that’s interesting. You’re supposed to ignore that.’ Then I realized: But most people probably don’t. The idea of grappling with another human being–forget the whole issue here of causing the thing to happen versus allowing it to happen–the whole idea that the outcome is uncertain is just assumed away. And, I think that makes a lot of the survey results on these problems problematic.

I find the same issue with Peter Singer’s example of ruining your shoes to save the drowning child. And therefore–because you should ruin your shoes to save a drowning child–you should send the value of your shoes to Africa for malaria bed nets, where malaria is prevalent, to save lives. As if it’s a certainty, that you can save lives by spending money. Which, tragically, is not true.

And–anyway, my only point is that I want to emphasize that in these hypotheticals, I think it’s more interesting to talk about them than to judge the survey results.

Michael Munger: Right? This brings us to three things. One is to Adam Smith–which was the reason that I wrote my essay, and probably the connection to EconTalk, in the sense that I think it’s interesting. And, I think if you read Adam Smith, the hypothetical about sacrificing one’s little finger or losing one’s little finger, compared to 100 million people in China dying in an earthquake, that’s a really interesting part of The Theory of Moral Sentiments.

But, let me suggest this. This afternoon–classes are starting today at Duke–I teach the first class in the PPE gateway–Philosophy, Politics, and Economics. And, I’m going to ask them how many have seen the movie, Oppenheimer. And probably a fair number of them will have seen the movie, Oppenheimer. And, that was not hypothetical. The United States faced two choices. It could try to develop an atomic weapon that it could use to end the war in the Pacific with Japan, or it could pursue a plan that they had come up with, called Operation Downfall.

And, Operation Downfall was a systematic military ground attack of the Japanese mainland. And interestingly, after the war, the Japanese defense plan–and it was called Operation Ketsu-Go. Operation Ketsu-Go was the fortification of much of Japan in a way that Okinawa had been fortified. And, the plan that–this is the Japanese estimate of their casualties–at least 2 million soldiers and as many as 50 million civilians would die if Operation Ketsu-Go were triggered. And, again, this is the Japanese view. It’s possible that it was somewhat larger, somewhat smaller, but the Japanese themselves had decided that that was what they were going to do.

The United States had lost 25,000 dead on Okinawa. Nearly 100,000 had been wounded or put out of action. The Japanese had lost 200,000 on Okinawa, and that was not the Japanese mainland.

So, the United States faced the choice: Are we going to develop and drop atomic weapons on cities–defenseless cities–and intentionally kill women and children? Or are we going to pursue military objectives? And, as a side-consequence–as an unavoidable side consequence–that, because the enemy is resisting, is going to result in 10 times as many casualties?

Now, it’s the trolley problem. Because, it is your intention to kill women and children, in the case of the atomic weapon. You are actively saying, ‘We are going to drop a bomb onto an unsuspecting defenseless city, and the result is going to be catastrophic deaths. And, that is literally our intention. That’s what we’re going for.’ Or, we can take another course of action that is within the tradition of military ethics.

And so, there is a–I think the usual hypothetical about should the United States have dropped the atomic bomb, is easy, because there are far fewer casualties in the case of the atomic bomb, even in Japan. Certainly, there’s far fewer casualties to the United States. The estimates for the U.S. casualties were up to a million–up to a million U.S. soldiers might die in the taking of the Japanese mainland. The Japanese strategy was to–and they may have been right–that the United States would give up. It just wasn’t worth it. At some point, after two or three more years, if they continued to resist, the United States would just give up.

And so, the question is: Was the United States morally justified in using atomic weapons when it involves killing? Not ‘allowing to die.’ When it involves killing, not allowing to die.

And so, the reason for that extended sort of claim–and let me raise one more–is–and whoever is playing the EconTalk drinking game may have to chug at this point, because you’ve not mentioned driverless cars or autonomous cars in quite a while. It used to be a main part of the show.

But, if you are programming an autonomous car and there’s a school bus ahead of you that you’re going to hit, or you can go up onto the sidewalk and hit a woman and a baby carriage, your choices are just to let the momentum of the car take you into the bus, or go up on the sidewalk and kill the woman and the baby carriage. That’s not a hypothetical. That’s literally things that we have to face.

So, the reason to do these hypotheticals is to train the minds of the young leaders in philosophy, politics, and economics, because they are, Russ, the elite. They are the best. So, the PPE [Philosophy, Politics, and Economics] students are clearly the smartest of all students, because that is the major everyone should pursue. We have to train them on toy problems, so that they could deal with the non-hypotheticals that they will have to deal with as tomorrow’s leaders.

18:28

Russ Roberts: In the TV series, The Good Place–a show I enjoyed immensely–one of the characters, Chidi, C-H-I-D-I, Chidi is a philosopher in the show. And he is forced–through a snap of the fingers, he finds himself in a trolley barreling down on some workers. There’s a track: he can divert to kill one worker. And, he’s got about 10 seconds to make the decision. And it’s highly entertaining and quite interesting.

Michael Munger: No spoiler alerts, but it was highly entertaining.

Russ Roberts: Yeah, I will not tell you what happens. I’m not sure–

Michael Munger: But, we will link to it. It’s important.

Russ Roberts: We may link to a clip from that episode, and you can say whether it’s your cup of tea, if you haven’t seen it. Later on in this series–this I will not mention–there is an actual answer to the trolley problem, which I found quite provocative and interesting.

But, in real life, you often have to make these decisions in real time. You don’t have the luxury of sitting around in a seminar room. And, certainly, Harry Truman didn’t have that luxury when deciding to drop the atomic bomb. It was not, evidently–he claims, I think–it was not a hard moral decision for him.

Michael Munger: He cared about Americans. He’s the American President.

Russ Roberts: And, I would just mention that a quick Google search suggests that about 200,000 people died in the bombings of Hiroshima and Nagasaki. Of course, many more had health problems related to it. But, 100,000 people approximately died in a single night in the firebombing of Tokyo–which was a horrific tragedy.

Michael Munger: And, intentional. It was an intentional firebombing.

Russ Roberts: Yep.

Michael Munger: They waited until the weather was right to have enough wind to create the firestorm.

Russ Roberts: And–again, I’m not sure that was a serious moral dilemma for the people who pulled the trigger on that decision. But, I’m only remarking on that because–and of course, neither of those casualty numbers were known in advance. Nobody knew exactly how many people would die in either of them. But, for some reason, the nuclear attacks are treated differently from the firebombing.

And it’s an example of why, many times in these hypotheticals, there are strange, intangible things that the answer hinges on for many people.

In the case of the trolley problem, as you point out, for many people, it’s the causing versus allowing to happen. People feel very differently about that. Why that is: longer conversation, another time, probably.

But, in the case of the atomic bomb versus firebombing, for some reason, the atomic bomb is put in its own unique box. And, you could argue it’s because it allows an unimaginable destruction in its aftermath in the future, or simply because it has a technological aspect to it that is different from bombs that human beings had been using for some time.

But, I thought it was unintentional–unintentionally ironic on your part that you mentioned that the atomic bomb was like a different kind of warfare. Not so much. The standard of what was acceptable after the bombing of London–which I think was an accident by Hitler–the bombing of civilian targets, opened up, changed the norms. Or at least excused a different set of norms. And, we got the firebombing of Tokyo.

There’s also of course the firebombing of Dresden. I’m looking that up now–

Russ Roberts: The firebombing–

Hmm?–

Russ Roberts: Only, quote, “only 25,000”. But horrific, destructive: one night of horror, terror and destruction for civilians. You can argue whether they were innocent or not; there’s a whole bunch of moral lines you could draw. Certainly, I would argue the children were innocent, but you could argue more widely depending on your perspective.

23:13

Russ Roberts: But, let’s move on to Adam Smith, unless you want to respond to anything I said. We are in the 300th anniversary of the birth of Smith, so it’s kind of nice that we’re talking about him. You quickly summarized it, but talk about it again, and let’s talk about the dilemma that Smith poses.

Michael Munger: Well, it was actually Adam Smith that was the point of my essay. Because I claimed that Adam Smith had ‘solved’–and perhaps we should put that in quotation marks–Adam Smith suggests a way that our intuition about what later has come to be called the trolley problem might work.

So, on page 136 of the Liberty Fund edition of The Theory of Moral Sentiments–and I should say, I first encountered this in your marvelous six-part discussion with Dan Klein a long time ago, now. I think it was 2009, that I really recommend to everyone this discussion of the brilliance of the way that Smith kind of turns the tables. Because usually, the story is–and I think it is worth reading, just to make sure we have the context. So, again, this is on page 136 in Section Three, Chapter Three.

Let us suppose that the great empire of China, with all its myriads of inhabitants, was suddenly swallowed up by an earthquake, and let us consider how a man of humanity in Europe, who had no sort of connexion with that part of the world, would be affected upon receiving intelligence of this dreadful calamity.

So, this is pretty much a standard person of humanity, not someone who is inured to human suffering. Someone who actually cares about other people. He would, I imagine, first of all:

express very strongly his sorrow for the misfortune of that unhappy people, he would make many melancholy reflections upon the precariousness of human life, and the vanity of all the labours of man, which could thus be annihilated in a moment. He would too, perhaps, if he was a man of speculation, enter into many reasonings concerning the effects which this disaster might produce upon the commerce of Europe, and the trade and business of the world in general. And when all this fine philosophy was over, when all these humane sentiments had been once fairly expressed, he would pursue his business or his pleasure, take his repose or his diversion, with the same ease and tranquillity, as if no such accident had happened. The most frivolous disaster which could befal himself would occasion a more real disturbance. If he was to lose his little finger to-morrow, he would not sleep to-night; but, provided he never saw them, he will snore with the most profound security over the ruin of a hundred millions of his brethren, and the destruction of that immense multitude seems plainly an object less interesting to him, than this paltry misfortune of his own.

Now, usually people stop there and say, ‘Adam Smith says we’re so selfish.’ There’s several interesting things about that. He calls the Chinese “our brethren”–which, in 1759 in England, racism is pretty rampant and unquestioned. It’s not clear that that is–Smith was an egalitarian. He’s a thoroughgoing egalitarian.

Russ Roberts: Yep.

Michael Munger: But, he’s actually using the fact that they are our brethren and we still don’t really care, as part of his point. And if you stop there, it makes you think, ‘Boy, people are just rotten, selfish pieces of work.’

Russ Roberts: Yeah, and it’s a beautiful hypothetical, because I think most of us would concede that it’s true. As unpleasant and unappealing as it is to believe that human beings are like this, most of us read news all the time about catastrophic things happening to people far away. Where in his day, the news would be the equivalent of watching a supernova, an event that would have occurred long ago in the past. By the time you got news of that earthquake in China, it would have happened, presumably, a week at least beforehand. Today, we watch it live, and we still sleep like babies at night. A strange expression, actually, ‘sleeping like a baby.’ It means to sleep well. Most babies don’t sleep so well; but whatever.

So, I think most of us–and I challenge all of you listening–to ask yourself, how many catastrophic things we just read about–many of us watched or read about the tragedy in Hawaii, a horrible, horrible loss of life of, for many people, their own country-, fellow country-members. And yet, they slept fine.

And yet, I think Smith is correct, that if we knew we were going to have surgery tomorrow, even minor surgery–so-called minor surgery, which, of course, is surgery that happens to someone else: there is no such thing as minor surgery for ourselves–we’d sleep badly. We’d be in trouble.

Michael Munger: This is disfiguring. You’re going to lose your little finger. They’re going to cut off part of your hand.

Russ Roberts: And it’s painful, and it’s uncertain about the real full consequences–

Michael Munger: It’s awful, it’s awful–

Russ Roberts: coming back to the ability to talk about hypotheticals. But, let’s say, try to pretend that it’s only your little finger. It’s still your little finger, and it’s hard–

Michael Munger: And the power is that it’s your little finger; that he’s probably right.

Russ Roberts: Yeah.

Michael Munger: I would be more upset about the prospective loss of my little finger than I would the deaths of many people that I don’t know, that I have no personal connection with.

Russ Roberts: At least, in the psychological impact on our day-to-day equanimity–I mean, that’s what Smith is literally saying.

Michael Munger: He’s talking about the way that we live our lives. So, I would think–and I would go for a walk, in fact. I might even cry a little. I would be upset: ‘That’s just so awful that this has happened.’ And, then, I’d have dinner.

29:49

Russ Roberts: Yeah. So, carry on, though. That is very important: that that is not the end of Smith’s discussion, but it is often where it is ended when other people write about it. But, he goes on.

Michael Munger: Well, and it is a fair place to end, in terms of an outline. So, what I read, full stop, that’s an important point. We have now set one important standard.

Then, he continues–and he’s not a big guy on paragraphs, so it’s still the same paragraph:

To prevent, therefore, this paltry misfortune to himself, would a man of humanity be willing to sacrifice the lives of a hundred millions of his brethren, provided he had never seen them? Human nature startles with horror at the thought, and the world, in its greatest depravity and corruption, never produced such a villain as could be capable of entertaining it.

Now, I think that’s not right. But, there are sociopaths who might very well consider that. Or, like, the Joker on Batman–

Russ Roberts: Yeah–

Michael Munger: But, that’s why he’s so horrible, is that there’s just wanton–or in No Country for Old Men, the sort of chaos figure. He’s so horrible precisely because he would consider it.

Russ Roberts: Meaning, a person who would sacrifice the 100 million foreigners to save one’s own little finger–which didn’t come out in that reading, by the way. I’m not sure why. You said “just to sacrifice,” you didn’t say “to save the little finger.” Did you skip ahead, or–

Michael Munger: No, I continued.

Russ Roberts: Okay, carry on.

Michael Munger: But, let me say it again, because it wasn’t clear:

To prevent, therefore, this paltry misfortune to himself

That’s: Save the little finger:

would a man of humanity be willing to sacrifice the lives of a hundred millions of his brethren, provided he had never seen them? Human nature startles with horror at the thought, and the world, in its greatest depravity and corruption, never produced such a villain as could be capable of entertaining it. But what makes this difference? When our passive feelings are almost always so sordid and so selfish, how comes it that our active principles should often be so generous and so noble? When we are always so much more deeply affected by whatever concerns ourselves, than by whatever concerns other men; what is it which prompts the generous, upon all occasions, and the mean upon many, to sacrifice their own interests to the greater interests of others?

Now, there’s more. There’s more to be said. And, he says a couple more important things.

Russ Roberts: Yeah, hang on. I want you to read that part, too. But, just to summarize here, what he’s saying is that: you’ve told me already you care about your little finger more than you do about the death of 100 million, because it keeps you up at night, and the other thing doesn’t. And yet, when you have a chance to act and save your little finger–which you care more about according to your emotional response–you won’t do it.

And that, to me, is the–if he’d been writing the book in 2023, he might have started, instead of being on 136 [i.e., ‘page 136’–Econlib Ed.]–I know there’s some preamble in the Liberty Fund edition, but it’s not the opening. It would have been a great opening few pages. Instead, it opens much more diffidently and in a more challenging way to follow. But, that is essentially the setup for The Theory of Moral Sentiments.

I just want to say, by the way, Mike, that I was in Scotland a week and a half ago. And, I was in Edinburgh for the first time, and I was able to go to Adam Smith’s house, Panmure House, where he spent the last 12 years of his life living with his mom. And, in the front of that, as you enter that building, there is a copy of The Theory of Moral Sentiments open there. And, it comes with a subtitle I had not seen. It is: The Theory of Moral Sentiments, OR, An Essay Towards an Analysis of the Principles by which Men Naturally Judge, Concerning the Conduct and Character, First of Their Neighbors, and Afterwards, of Themselves.

Now, I mention that only because it’s a very useful bit of verbiage to help you understand what The Theory of Moral Sentiments is really about, given that we don’t use the phrase ‘moral sentiments’ in the way today that Smith did.

But, that’s what it’s about: it’s about judging the character and actions of your neighbors, and of yourself.

And so, what he’s saying is that: ‘Okay, this is who we are. We don’t care much about others. We care more about ourselves. We are self-interested. But we are not selfish.’ We do not kill others–most of us–to save a small amount of ourselves.

And that’s a startling and bold claim, which I also believe is true.

Michael Munger: I have an embarrassing admission. And, that is, because I am so much more of a fanboy of you than you are of yourself–which is as should be. Dan Klein read the subtitle that you just gave in your six-part EconTalk about it. Which of course, you wouldn’t know. This is like somebody goes up to somebody from the Eagles, and, ‘How did you write these lyrics?’ ‘I have no idea. I don’t remember that.’ But, the fanboy has memorized them, and expects you to have memorized all this. You have seen it before. At least, you’ve heard it.

Russ Roberts: Oh.

Michael Munger: But, that was 2009.

Russ Roberts: 2009. But, this confirms Adam Mastroianni’s claim, that the brain is not connected to the ears, episode of a couple of weeks ago.

Michael Munger: You said you had not read it before. Actually, technically, you have saved yourself. You would’ve heard it.

Russ Roberts: But, I’d heard it. It’s embarrassing. Okay.

Michael Munger: Because Dan, unsurprisingly, thought it was important: That–that subtitle is important–to understanding it.

So, before I continue to give Smith’s explanation: In my essay, I say, Adam Smith, if he had had the opportunity, would have posed the question in the language of the trolley problem.